So this is the first post for this blog. I thought I’d start off with something cool, kinda fun, and not too difficult to get started with.

One of my favorite subjects of all time in robotics is computer vision…well, not strictly a robotics subject, but the two are closely coupled. Anyways, I digress.

In this day and age, computer vision and perception are very popular research topics, with computer scientists and roboticists working hard to figure out how to implement state-of-the-art algorithms that can reliably detect and recognize objects in very unstructured environments. A good source of information and papers on the subject can be found here.

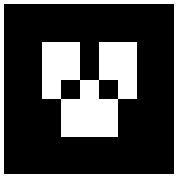

What I’ll be walking you through today is how to use a really cool library that is available as a ROS (Robot Operating System) package named ar_track_alvar. This package sidesteps a lot of the big questions and challenges facing modern high-fidelity perception for robotic systems by using fiducials: little pictures that can be used as points of reference. These things are also called AR tags, hence the name ar_track. AR tags look like little QR code squares, as seen below:

source: http://wiki.ros.org/ar_track_alvar

The real power of these tags is their square shape, which, through “a priori” knowledge (stuff we know beforehand), allows us to estimate their position and orientation with respect to a camera frame based on their size in the image and their distortion. The library computes the projective transformations and spits out the tag’s position. Which is cool. So how do we do the thing?
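Before we get to that, here’s a quick aside on the “size in the image” part. This is only a rough sketch using the textbook pinhole camera model for a tag facing the camera head-on. It is not how ar_track_alvar works internally (the library solves for the full pose, orientation included), and the focal length and tag size below are values I’m assuming purely for illustration.

```python
def distance_from_apparent_size(tag_size_m, apparent_size_px, focal_length_px):
    """Distance to a tag facing the camera head-on, using the pinhole model.

    apparent_size_px = focal_length_px * tag_size_m / distance
    so: distance     = focal_length_px * tag_size_m / apparent_size_px
    """
    if apparent_size_px <= 0:
        raise ValueError("apparent_size_px must be positive")
    return focal_length_px * tag_size_m / apparent_size_px


# Assumed values, for illustration only.
FOCAL_LENGTH_PX = 525.0  # in the ballpark of a Kinect RGB camera at 640x480
TAG_SIZE_M = 0.05        # a 5 cm tag

# The smaller the tag looks in the image, the farther away it must be.
for side_px in (100.0, 50.0, 25.0):
    d = distance_from_apparent_size(TAG_SIZE_M, side_px, FOCAL_LENGTH_PX)
    print("tag side %5.1f px -> about %.2f m away" % (side_px, d))
```

Halving the tag’s size in the image doubles the estimated distance, which is why knowing the real, physical tag size ahead of time matters so much.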

I’m assuming that you clicked the ROS link up above and installed it, and did a few tutorials and are now a ROS master. First step is to install the package. Thankfully there is a Debian package for it, so it’s as easy as:

$ sudo apt-get install ros-[YOURDISTRO]-ar-track-alvar

I’m on kinetic, but if you’re on Ubuntu 14.04, you will have installed indigo, and you will use indigo in place of [YOURDISTRO].

Once installed you may need to run a quick rospack profile. Once that’s done, you will be able to use the package. Now that we have the package, let’s make our own AR Tag! To do that, you will either want to make a folder to keep all of your tags, or navigate to an existing one you want to output the images to. Once there, you will run:

$ rosrun ar_track_alvar createMarker 0

You should see a new file named MarkerData_0.png in your current directory. You can easily open the image from your terminal by running:

$ eog MarkerData_0.png

An image like the following should pop up on your screen. Note that each AR tag has a different pattern based on the number you requested. Tag 0 will always look like tag 0, tag 1 will always look like tag 1, and so on. This is really useful if you tag an object that a robot needs to interact with using a specific number, because you can then reliably and repeatably pick out that object again.

Another note on printing: you can create AR tags in different sizes. So if you want one really big tag or several small ones, depending on your application, you can have that! Just make a note of the size you printed it at, because that’ll be important later. The default size for these tags is 9 units x 9 units. So, for example, if you wanted to output tag number 7 at 5 cm x 5 cm, the syntax would be:

$ rosrun ar_track_alvar createMarker -s 5 7
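If you need a whole batch of tags, typing that command over and over gets old. Here’s a small sketch that just builds the same command line for several IDs. The helper name create_marker_command is something I made up for this example, and it only uses the -s flag shown above.

```python
def create_marker_command(tag_id, size_cm=None):
    """Build the createMarker command for one tag as an argument list.

    Hypothetical helper for this post; it is not part of ar_track_alvar.
    """
    cmd = ["rosrun", "ar_track_alvar", "createMarker"]
    if size_cm is not None:
        cmd += ["-s", str(size_cm)]  # same -s flag as in the command above
    cmd.append(str(tag_id))
    return cmd


# Print the commands for tags 0 through 3, each 5 units on a side.
for tag_id in range(4):
    print(" ".join(create_marker_command(tag_id, size_cm=5)))
```

Each list could be handed to subprocess.call from a terminal where ROS is sourced, or you can simply copy the printed lines into your shell.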

Some advice: printer settings, image scaling in the print dialog, or packing a bunch of tags onto a single page can all change the actual printed size of the tag. I would suggest measuring the printed tag just to be absolutely sure of its size, because that number is what the software uses to estimate the tag’s position with respect to the camera. If a tag is tiny and the software doesn’t know it, the software will think the tag is really far away.
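To put a number on that, here’s a back-of-the-envelope sketch, again assuming the simple head-on pinhole model rather than whatever the library does under the hood. In that model the estimated distance scales with the ratio of the size you told the software to the size the tag really is. The function name and the example sizes are just mine.

```python
def estimated_distance(true_distance_m, assumed_size_cm, actual_size_cm):
    """Distance a pinhole-model tracker would report for a mis-measured tag.

    The tag's size in the image depends on its actual size, but the tracker
    converts that back to a distance using the assumed size, so the result
    is off by the factor assumed_size / actual_size.
    """
    if assumed_size_cm <= 0 or actual_size_cm <= 0:
        raise ValueError("sizes must be positive")
    return true_distance_m * assumed_size_cm / actual_size_cm


# Example: we tell the software 5 cm, but the printer scaled the tag.
for actual_cm in (5.0, 4.6, 2.5):
    est = estimated_distance(1.0, 5.0, actual_cm)
    print("printed at %.1f cm, really 1.00 m away -> reported %.2f m" % (actual_cm, est))
```

So a tag that printed at half the intended size would show up roughly twice as far away as it really is.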

Anyways. Now onto a real test. I have an Xbox 360 Kinect, which I picked up for cheap from a “retro game shop” near my house. You can get your own for cheap too, OR use a single USB camera.

You can clone my example repo from here: https://github.com/atomoclast/ar_tag_toolbox

You will then need to put it into your catkin_ws or whatever other workspace you have specified and build/source it using:

$ catkin_make

$ source devel/setup.bash

If you’re really feeling adventurous, you can set up catkin_tools, but that’s a tutorial for another day.

For the Xbox 360 Kinect, you will need a package called freenect to launch the hardware as a ROS node. To test things out, plug in your camera/sensor, then launch its driver node.

For Kinect, in two separate terminals:

$ roslaunch freenect_launch freenect.launch

$ roslaunch ar_tag_toolbox ar_track_kinect.launch marker_size:=5

The only thing I would point out is that on the second line we’re passing in a marker_size of 5. The unit is [cm], and you should pass in whatever the measured size of your tag is.

Similarly, for a USB camera:

$ roslaunch ar_tag_toolbox usb_cam.launch cam_id:=0

$ roslaunch ar_tag_toolbox ar_track_usb_cam.launch marker_size:=5

Note the cam_id being defined. This is the number of the camera you want to launch. If you have a laptop with a built-in webcam, that will be 0, so a secondary USB camera will be 1. The marker size is passed in as well.

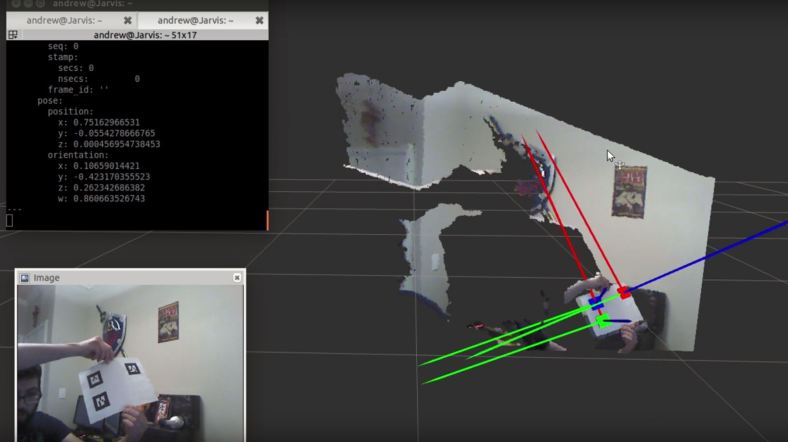

If all goes well, you end up with something like this:

As you can see in the video, the AR tags are being tracked in space, with an RGB (xyz) coordinate frame drawn on each square: red is x, green is y, and blue is z. Pretty cool!
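If you’d rather get at those poses in your own code instead of just looking at them, here’s a minimal sketch of a listener. I’m assuming the tracker publishes ar_track_alvar_msgs/AlvarMarkers messages on the ar_pose_marker topic (the package default, as far as I know), that positions come out in metres, and that the launch files in my repo don’t remap anything; check with rostopic list if nothing shows up. The function names are made up for this example.

```python
import math


def describe_marker(marker_id, x, y, z):
    """Format one tag detection as a readable line (positions assumed in metres)."""
    distance = math.sqrt(x * x + y * y + z * z)
    return "tag %d: x=%.2f y=%.2f z=%.2f (%.2f m away)" % (marker_id, x, y, z, distance)


def on_markers(msg):
    """Callback: msg is assumed to be an ar_track_alvar_msgs/AlvarMarkers."""
    for marker in msg.markers:
        p = marker.pose.pose.position  # marker.pose is a PoseStamped
        print(describe_marker(marker.id, p.x, p.y, p.z))


def listen():
    """Subscribe to the tracker output and print every detection."""
    # ROS imports live in here so the helpers above can be tried without ROS.
    import rospy
    from ar_track_alvar_msgs.msg import AlvarMarkers

    rospy.init_node("ar_tag_listener")
    rospy.Subscriber("ar_pose_marker", AlvarMarkers, on_markers)
    rospy.spin()
```

With the camera and tracker launch files running, calling listen() from a sourced terminal should print one line per visible tag, with the ID matching the number you passed to createMarker.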

Liked what you saw? Subscribe for more cool how-tos, side projects and robot stuff!

Have a comment, issue, or question? Leave a comment or message me on GitHub.