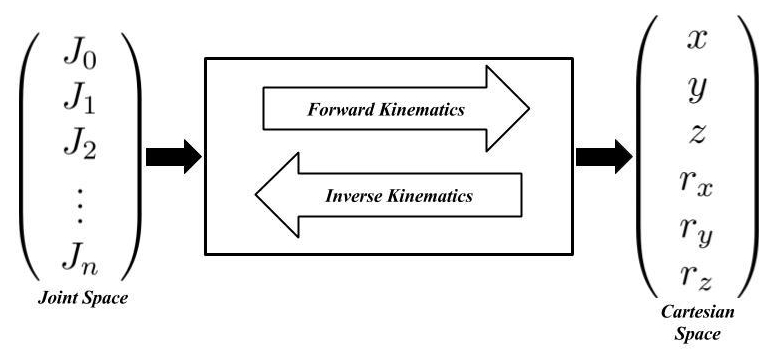

In my last post, we began to scrape the surface in robotic manipulators by discussing joint space, Cartesian space, and their intertwined relationship. The caveat to that analysis was that everything was static. We were solving for end effector position in space or for joint angles given a desired end effector. The issue is, robots have to move with respect to time. Thanks to our good friend Newton, we know as something changes its position with respect to time, we have a velocity.

This robot arm must move in a coordinated fashion to move the gripper into position to catch the object being thrown at it. Source: Swiss Federal Institute of Technology in Lausanne

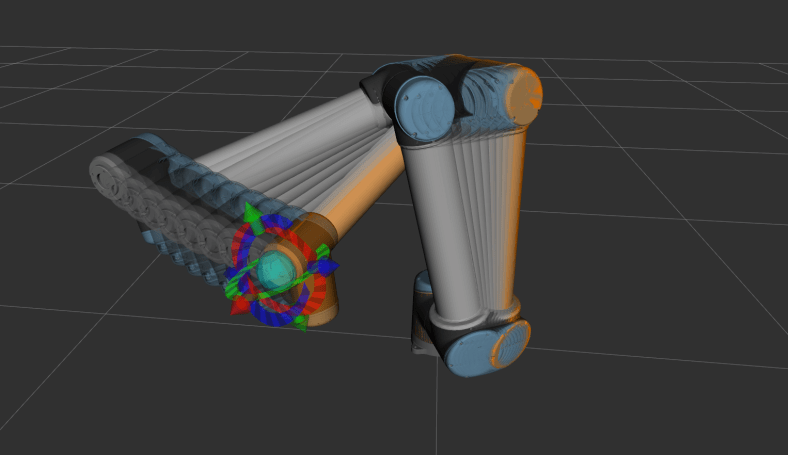

To make things interesting, sometimes we have constraints on the task at hand that requires to manipulator to move the end effector in a straight line in Cartesian space, think about a task like sliding open a window, or pulling a peg out of a hole like the robot below is about to do. That means just planning joint angles for a starting and ending position isn’t enough, we will need to use coordinated motion between the individual joints to get the resulting end effector to move along a specific path.

This robot will need to use Cartesian control to pull the peg out in the horizontal direction only. Source: MIT CSAIL

Team Jacobian: The Differential Relationship

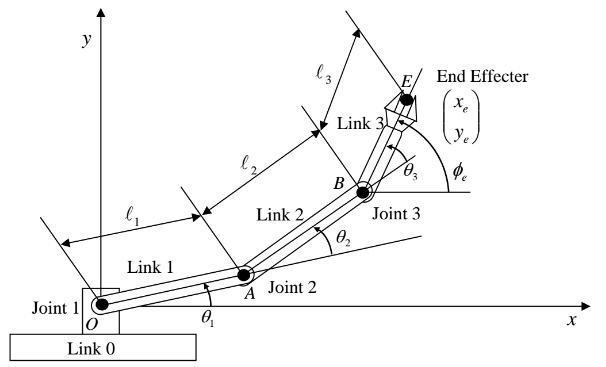

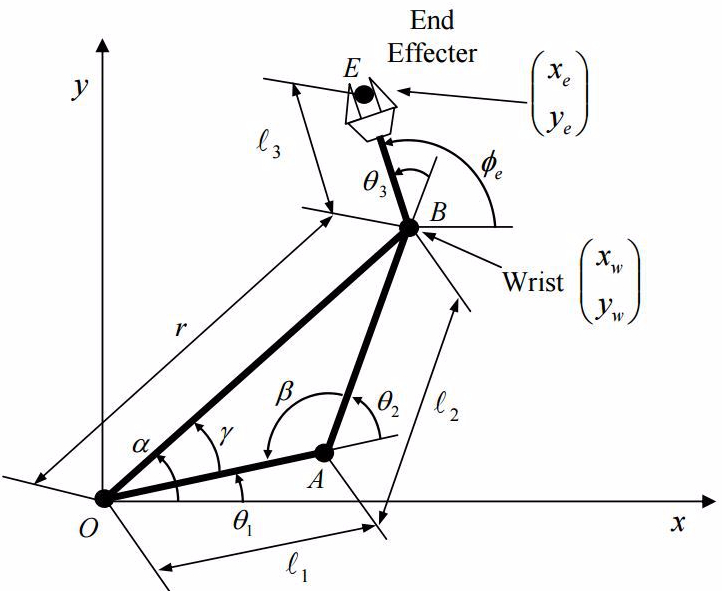

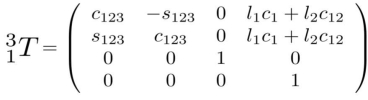

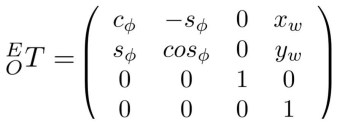

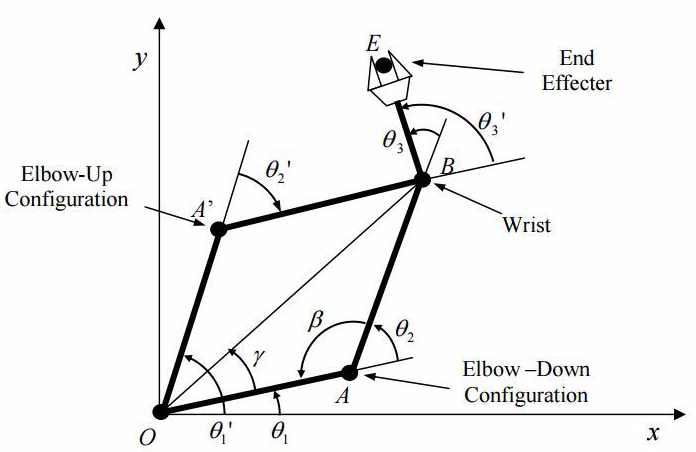

In the last post, we analyzed a planar robot manipulator with three degrees of freedom. To make things a bit simpler on ourselves, we’re going to look at a two degree of freedom manipulator.

Two Degree of Freedom Planar Manipulator, Source: Introduction to Robotics, H. Harry Asada

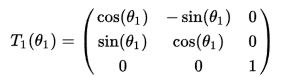

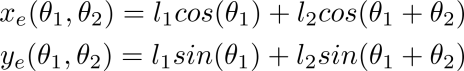

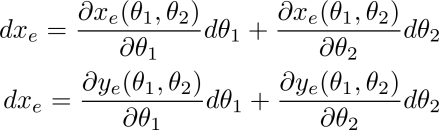

We can then describe the end effector position, xₑ and yₑ, in space in terms of the joint angles θ₁ and θ₂ as follows:

These two linear equations show that any change in either θ₁ or θ₂ will cause a change in xₑ and yₑ. Another way to think of it is that the end effector position is dependent on the variables of the joint angles. Recalling some calculus, we can take the partial derivatives of the above equations and describe the differential relationship between end effector position and joint angle as such:

We can write the above equation in a much more compact format by stating:

![]()

Note that:

,

,

The q vector is known as the system state. The J matrix is referred to as the Jacobian matrix. A Jacobian, mathematically, is just a matrix of partial differential equations. Since we’re engineers and roboticists, we like to make mathematicians angry and refer to the “Jacobian matrix of a manipulator that describes the velocity of the system and how it affects the end effector’s position” as just the “Jacobian”. Small tangent, sure, I’m only bringing it up to make you, the reader, aware that there are other types of Jacobians, anyways, back to the subject at hand. The Jacobian for the system shown above can is:

As I was mentioning above, the Jacobian represents the change of the end effector’s component position with respect to small changes in each joint angle. Now that we have this, we can begin to really apply some interesting control over our arm! Say that we have a robot arm that welds a joint together, and we need to ensure it moves in a particular straight line, with a very specific speed, we can use our new tool, the Jacobian to provide that level of control! Going back to the 2DOF arm shown above, say that we wanted to move the end effector in the x direction at a specific speed, let’s say to weld a straight line, we can formulate the dynamics of the system as follows:

![]()

As can be shown here, the Jacobian maps the relationship between joint velocities and end effector velocities.

Inverse Kinematics of Manipulators In Motion

In my last post, we not only discussed forward kinematics, but also inverse kinematics. That analysis was with a static end effector position and orientation in space. In the scenario described above, for the welding robot’s end effector moving in a straight line at the desired velocity, we can do some linear algebra and isolate the joint velocity matrix and obtain:

![]()

One very cool thing to note about this solution is that, mathematically, it can only have one solution, as long as the Jacobian is non-singular. In very mathy terms, a Jacobian drops rank and becomes non-invertible. Basically, the math doesn’t work, and the Jacobian doesn’t solve well, and it can cause the controller to tell the manipulator it needs to be at some impossibly high velocity, and the controller will try and follow suit, causing sometimes catastrophic results. It’s reasons like this that massive industrial robots are always behind huge cages and no humans are allowed into the work area.

Unfortunately, sometimes industrial robots fail and can cause the loss of human life. This is why collaborative robots (or CoBots) like Universal Robots, have become very popular in recent years. Source: https://www.presstv.com/Detail/2015/07/02/418396/Germany-Baunatal-Volkswagen-Hillwig

If we calculate the inverse Jacobian and multiply that by the decomposed (in terms of component directions) end effector velocity, vₑ, we will have the required individual joint velocities. That means to implement this on a robot, we will command the individual joint controllers to the computed joint velocities, from the above controller. This type of control is called Resolved Motion Rate Control, which can also be shown as:

This is a compact form to implement a resolved motion rate controller given a desired end effector position, and velocity, given feedback on the current position in space. The K term is gain term to help tune the controller.

Since the robot arm’s positions and velocities will change over time, the Jacobian’s computed values will change over time as well, depending on the arm configuration. The take away from this is that some higher level of coordinate will be required to ensure the controller feeds velocities in a smooth and controlled manner.

Let’s look at the above 2DOF arm. We want to move the robot arm from Point A (2, 0) to point B, C, then to finally D (0, 2). Note that each link of the arm below is the same length.

Example 2DOF Arm with desired waypoints for a full trajectory. Notice the curve showing the envelope of maximum reach. Anything along this line would cause a singularity for the arm. Source: Introduction to Robotics, H. Harry Asada

We can now state the joint angles, θ₁, θ₂, as follows:

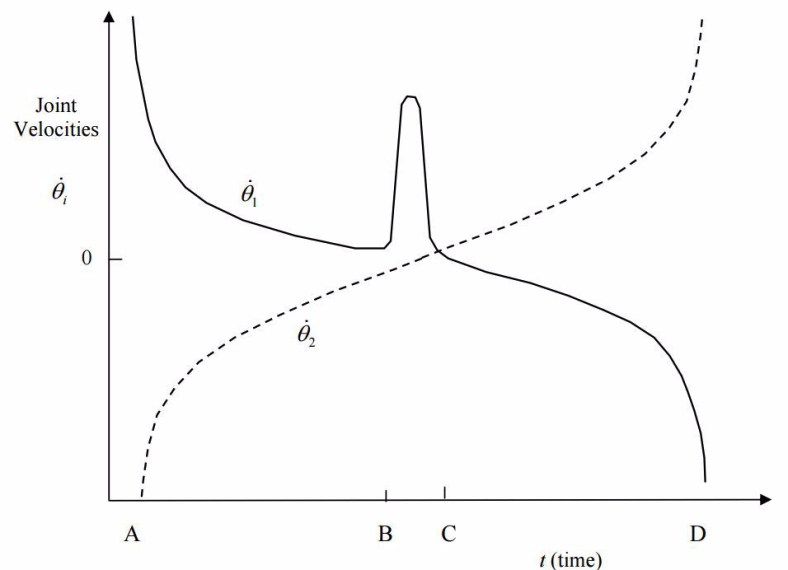

If we were to graph the joint velocities with respect to time as the arm moved from one point to another, the graph would look as follows:

The above graph shows the joint velocities of the arm as it moves through the waypoints with respect to time. Source: Introduction to Robotics, H. Harry Asada

A few things to note in the above graph. First, notice at how large the joint velocities are near the initial and final positions, A and D. This is due to the Jacobian saying that the joint needs to be at a specific position in a short period of time, causing such a high acceleration. In a real system, this could cause a robot to fault due to such high accelerations, another thing that is helping is that at these positions, θ₂ is at or approaching 0. This causes the mathematical issues described above in Jacobian Singularities.

Moving from one extreme to the other, as we approach B, and move to C, we see θ₁ accelerate quickly to allow the end effector to take the shortest path between points B and C. In order to reach this, the joint is almost -180 degrees, which is a singularity for this configuration.

For the interested reader, I highly suggest Stanford’s Intro To Robotics Lectures. The following lecture is about Jacobians in a much finer detail:

Computing Jacobians with ROS

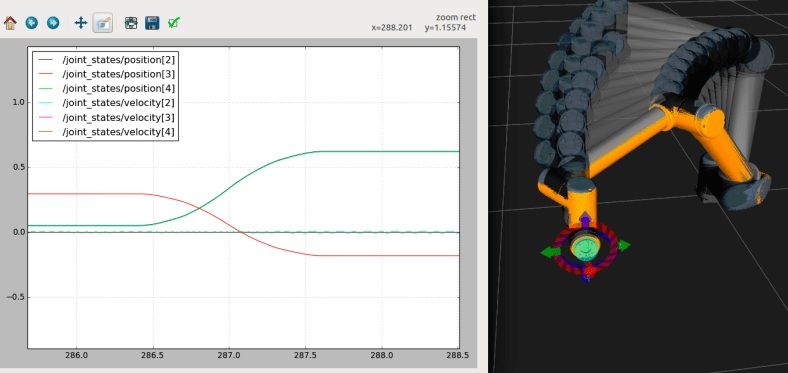

Last week, I showed you how to move a simulation robot from one point in space to another using MoveIt. If you wish to continue with this example, you will need to follow last week’s tutorial and add the proper packages to your catkin workspace.

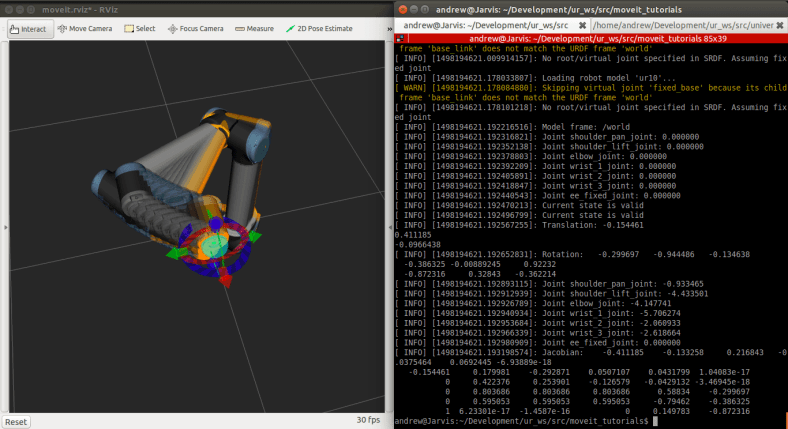

Jacobians are dynamic things. They aren’t easy to visualize like a starting point or an ending point, or even the waypoints in between. They’re intertwined in all of the above, so the only real way to understand them is to experiment with MoveIt and visualize it.

The above image shows a Universal Robot UR10 moving between to waypoints, and a looking at how the velocity profile changes with positions.

To run the above tutorial configuration, you will need to open up four terminals and run:

$ roslaunch ur_gazebo ur10.launch

$ roslaunch ur10_moveit_config ur10_moveit_planning_execution.launch sim:=true

$ roslaunch ur10_moveit_config moveit_rviz.launch config:=true

With that up and running, you should see the same Gazebo Simulation Window as well as Rviz window with the UR being shown and the MoveIt Config window ready for you to plan paths!

In the final terminal you will run:

$ rqt_plot /joint_states/position[2], /joint_states/velocity[2], /joint_states/position[3],/joint_states/velocity[3], /joint_states/position[4], /joint_states/velocity[4]

You can always add or delete which joints you want to track, this is just to get you up and running.

You should then see a window similar to the one in the image above showing the position and velocity of joints as time goes on.

In ROS, most data is exposed as a topic and transferred via messages. We can learn a little more about how ROS encodes these joint state topics by running:

$ rosmsg show JointTrajectory

It should return the structure of a JointTrajectory message:

[trajectory_msgs/JointTrajectory]:

std_msgs/Header header uint32

seq

time stamp

string frame_id

string[]

joint_names

trajectory_msgs/JointTrajectoryPoint[] points

float64[] positions

float64[] velocities

float64[] accelerations

float64[] effort

duration time_from_start

This is the topic that Rviz/MoveIt and others use to update the virtual robot’s position in space when new information is sent up from a real robot (or our case, a simulation robot, but to our control software, it’s real).

This next section is going to go over the MoveIt API a bit further to get you more into the trenches with the data available with MoveIt. With that API we will be able to print out the UR10’s Joint states, end effector state in Cartesian space, as well as the Jacobian matrix for this robot! To do so, you will need to navigate back to your catkin workspace and clone the following:

If you are on ROS Kinetic:

$ git clone https://github.com/atomoclast/moveit_tutorials.git --branch ur10_kinetic

If you are on ROS Indigo:

$ git clone https://github.com/atomoclast/moveit_tutorials.git --branch ur10_indigo

You should just build the workspace again, then you should be able to run:

If you are on ROS Kinetic:

$ rosrun moveit_tutorials kinematic_model_tutorial

You should then see something similar to my screen:

Expected output from the node. Note the Jacobian Matrix on the bottom.

Below is the block of code that is of real interest. It shows how we use the getJacobian() method from the MoveIt API to print the Jacobian.

Eigen::Vector3d reference_point_position(0.0,0.0,0.0);

Eigen::MatrixXd jacobian;

kinematic_state->getJacobian(joint_model_group, kinematic_state->getLinkModel(joint_model_group->getLinkModelNames().back()),

reference_point_position,

jacobian);

ROS_INFO_STREAM("Jacobian: " << jacobian);

You should try and move the robot in a variety of positions and plans and see how it effects the Jacobian. The matrix is rather large, but as you see, it will be different every time!

Have fun!

For my next post, I’m thinking of some fun computer vision/machine learning projects/tutorials I can post! Stay Tuned!

Liked what you saw? Subscribe for more cool how-tos, side projects, and robot stuff!

Have a comment, issue, or question? Leave a comment or message me on GitHub.